Meta released Llama3 on April 18, 2024. It is evaluated on several benchmarks and achieves state-of-the-art results among open-source LLMs.

Introduction

Instruct model performance

The performance of Llama3 8B compared with Gemma and Mistral:

| Model | Llama3 8B | Gemma 7B-It | Mistral 7B Instruct |
|---|---|---|---|
| MMLU (5 shot) | 68.4 | 53.3 | 58.4 |
| GPQA (0 shot) | 34.2 | 21.4 | 26.3 |
| HumanEval (0 shot) | 62.2 | 30.5 | 36.6 |
| GSM-8K (8 shot, CoT) | 79.6 | 30.6 | 39.9 |
| MATH (4 shot, CoT) | 30.0 | 12.2 | 11.0 |

The performance of Llama3 70B compared with Gemini Pro 1.5 and Claude 3 Sonnet:

| Model | Llama3 70B | Gemini Pro 1.5 (Published) | Claude 3 Sonnet (Published) |
|---|---|---|---|
| MMLU (5 shot) | 82.0 | 81.9 | 79.0 |
| GPQA (0 shot) | 39.5 | 41.5 (CoT) | 38.5 (CoT) |
| HumanEval (0 shot) | 81.7 | 71.9 | 73.0 |
| GSM-8K (8 shot, CoT) | 93.0 | 91.7 (11 shot) | 92.3 (0 shot) |
| MATH (4 shot, CoT) | 50.4 | 58.5 (Minerva prompt) | 40.5 |

Pre-trained model performance

The performance of Llama3 8B compared with Gemma and Mistral:

| Model | Llama3 8B | Gemma 7B (Published) | Gemma 7B (Measured) | Mistral 7B (Published) | Mistral 7B (Measured) |
|---|---|---|---|---|---|
| MMLU (5 shot) | 66.6 | 64.3 | 64.4 | 62.5 | 63.9 |
| AGIEval English (3-5 shot) | 45.9 | 41.7 | 44.9 | - | 44.0 |
| BIG-Bench Hard (3 shot, CoT) | 61.1 | 55.1 | 59.0 | - | 56.0 |
| ARC-Challenge (25 shot) | 78.6 | 53.2 (0 shot) | 79.1 | 78.1 | 78.7 |
| DROP (3 shot, F1) | 58.4 | - | 56.3 | - | 54.4 |

The performance of Llama3 70B compared with Gemini Pro 1.0 and Mixtral 8x22B:

| Model | Llama3 70B | Gemini Pro 1.0 (Published) | Mixtral 8x22B (Measured) |
|---|---|---|---|
| MMLU (5 shot) | 79.5 | 71.8 | 77.7 |
| AGIEval English (3-5 shot) | 63.0 | - | 61.2 |
| BIG-Bench Hard (3 shot, CoT) | 81.3 | 75.0 | 79.2 |
| ARC-Challenge (25 shot) | 93.0 | - | 90.7 |
| DROP (3 shot, F1) | 79.7 | 74.1 (variable shot) | 77.6 |

Model Architecture

Llama3 makes several improvements over Llama2:

- Llama3 uses a tokenizer with a vocabulary of 128K tokens.

- Llama3 adopts grouped query attention (GQA) across both the 8B and 70B sizes.

- Llama3 uses a context window of 8192 tokens.
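To make the effect of GQA concrete, the sketch below maps query heads onto shared key/value heads and compares the resulting KV-cache sizes at the 8192-token context length. The head counts, head dimension, and layer count are assumed values chosen for illustration, not figures taken from this post, and `kv_head_for_query_head` and `kv_cache_bytes` are hypothetical helper names.

```python
def kv_head_for_query_head(q_head: int, n_q_heads: int, n_kv_heads: int) -> int:
    """Return the index of the KV head shared by a given query head."""
    group_size = n_q_heads // n_kv_heads  # query heads per KV head
    return q_head // group_size


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of the K and V caches for one sequence (factor 2 = keys + values)."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Assumed configuration for illustration (not from the post).
N_LAYERS, N_Q_HEADS, N_KV_HEADS, HEAD_DIM, SEQ_LEN = 32, 32, 8, 128, 8192

mapping = [kv_head_for_query_head(h, N_Q_HEADS, N_KV_HEADS) for h in range(N_Q_HEADS)]
mha = kv_cache_bytes(N_LAYERS, N_Q_HEADS, HEAD_DIM, SEQ_LEN)   # one KV head per query head
gqa = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)  # KV heads shared by groups
print(mapping[:8])
print(f"MHA cache: {mha / 2**30:.2f} GiB, GQA cache: {gqa / 2**30:.2f} GiB")
```

Under these assumptions, four query heads share each KV head, so the cache shrinks by the same factor of four.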

Training

Llama3 is pre-trained on 15T tokens. Compared to Llama2, the dataset is seven times larger and includes four times more code.

5% of the training data is non-English, to support multilingual use cases.

Data processing includes:

- Heuristic filters

- NSFW filters

- Semantic deduplication approaches

- Text classifiers to predict data quality. Llama2 is used to generate training data for the text classifiers.
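A toy version of such a pipeline might look like the sketch below. It is not Meta's pipeline: the thresholds are arbitrary, the function names are hypothetical, and word-set Jaccard similarity is used as a simple stand-in for semantic deduplication, which in practice would rely on learned embeddings.

```python
def passes_heuristics(text, min_words=5, max_symbol_ratio=0.3):
    """Heuristic filter: drop very short texts and texts that are mostly symbols."""
    words = text.split()
    if len(words) < min_words:
        return False
    symbols = sum(1 for ch in text if not (ch.isalnum() or ch.isspace()))
    return symbols / len(text) <= max_symbol_ratio


def jaccard(a, b):
    """Word-set overlap between two texts (stand-in for semantic similarity)."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    return len(sa & sb) / len(sa | sb)


def dedup(texts, threshold=0.8):
    """Keep a text only if it is not too similar to any text already kept."""
    kept = []
    for text in texts:
        if all(jaccard(text, other) < threshold for other in kept):
            kept.append(text)
    return kept


docs = [
    "The quick brown fox jumps over the lazy dog",
    "the quick brown fox jumps over the lazy dog",   # near-duplicate
    "$$$ !!! ### @@@ %%% ^^^",                        # mostly symbols
    "too short",
    "Grouped query attention shares key and value heads across query heads",
]
clean = dedup([d for d in docs if passes_heuristics(d)])
print(clean)
```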

The authors also explore different data-mixing strategies to improve the performance of Llama3.

Scaling up pretraining

The Llama3 team developed a series of scaling laws for downstream benchmark evaluations.

Scaling laws help to:

- Select an optimal data mix and make informed decisions on how best to use training compute.

- Predict the performance of the largest models on key tasks before actually training them.

The authors find that model performance continues to improve log-linearly as the number of training tokens increases. Larger models can match the performance of smaller models with less training compute, but smaller models are generally preferred because they are much more efficient at inference time.
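"Log-linear" here means the score grows roughly linearly in the logarithm of the token count. The sketch below fits such a curve by ordinary least squares and extrapolates it to 15T tokens. The data points are made up for illustration and `fit_log_linear` is a hypothetical helper; this is not the fitting procedure used by the authors.

```python
import math


def fit_log_linear(tokens, scores):
    """Least-squares fit of score = a + b * log10(tokens)."""
    xs = [math.log10(t) for t in tokens]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(scores) / n
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    a = mean_y - b * mean_x
    return a, b


# Hypothetical measurements: training tokens and a benchmark score.
tokens = [1e11, 1e12, 1e13]
scores = [40.0, 50.0, 60.0]

a, b = fit_log_linear(tokens, scores)
predicted = a + b * math.log10(1.5e13)  # extrapolate to 15T tokens
print(f"gain per 10x tokens: {b:.2f}, predicted score at 15T tokens: {predicted:.2f}")
```

The slope `b` is the score gained for every tenfold increase in training tokens, which is the quantity a log-linear trend keeps constant.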

The authors combine three types of parallelization:

- Data parallelization

- Model parallelization

- Pipeline parallelization

Instruction fine-tuning

Instruction fine-tuning of Llama3 combines:

- Supervised fine-tuning

- Rejection sampling

- Proximal Policy Optimization

- Direct Preference Optimization

Learning from preference rankings via PPO and DPO also greatly improves the performance of Llama3 on reasoning and coding tasks, because training on preference rankings helps the model select the right answer when it is torn between candidates.
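For reference, the standard DPO objective for a single preference pair can be written in a few lines. The sketch below assumes that summed sequence log-probabilities are already available from the policy and a frozen reference model; the numeric inputs and `beta=0.1` are illustrative values, and this is the textbook loss, not Meta's training code.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair, given summed sequence log-probs."""
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)) written as log(1 + exp(-margin))
    return math.log1p(math.exp(-margin))


# Policy identical to the reference: no preference learned yet.
no_pref = dpo_loss(-10.0, -10.0, -10.0, -10.0)
# Policy raises the chosen answer and lowers the rejected one.
better = dpo_loss(-8.0, -12.0, -10.0, -10.0)
print(f"{no_pref:.4f} {better:.4f}")
```

The loss falls as the policy assigns relatively more probability to the preferred answer than the reference model does.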


Introduction

Meta released the LLaMA 4 series on April 5, 2025. It includes three models: Llama 4 Scout, Llama 4 Maverick, and Llama 4 Behemoth. All three are based on an MoE architecture and support multimodal input.

| Model | Layers | Heads (Q / KV) | Context Length | #Parameters (activated/total) | #Experts (activated/total) | #Tokens |
|---|---|---|---|---|---|---|
| LLaMA 4 Behemoth | - | - | - | 288B / 2T | (1 shared + 1 routed) / 16 | - |
| LLaMA 4 Maverick | 48 | 40/8 | 1M | 17B / 400B | (1 shared + 1 routed) / 128 | ~22T |
| LLaMA 4 Scout | 48 | 40/8 | 10M | 17B / 109B | (1 shared + 1 routed) / 16 | ~40T |

The training data has a cutoff of August 2024. LLaMA 4 supports more than 200 languages, of which over 100 have more than 1B training tokens each.

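Highlights

- Native multimodality: LLaMA 4 is a natively multimodal architecture.

- Ultra-long context: LLaMA 4 supports context lengths of 1M tokens or more.

- Interleaved dense and MoE layers: alternating dense MLP and MoE layers improves overall inference efficiency. (In Meta's terminology, iRoPE refers to interleaving attention layers with and without rotary position embeddings to support long context.)

- A vision encoder based on MetaCLIP.

- MetaP: hyperparameters are tuned with MetaP.

- FP8-precision training.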

Pre-training

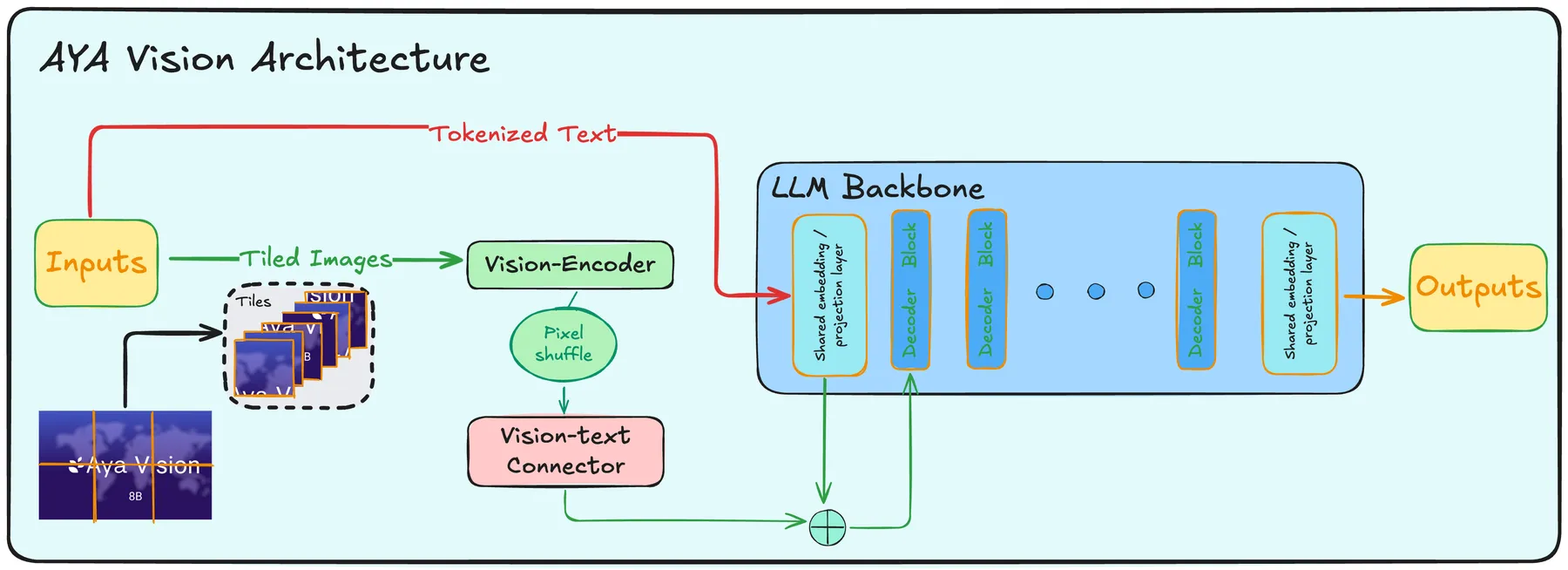

LLaMA 4 仍然是一个基于transformer的架构,但是引入了MoE,其示意图如下所示

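Each MoE layer contains one shared expert and routes every token to one routed expert. Unlike most other LLMs, LLaMA 4 interleaves dense MLP and MoE layers: specific transformer layers use an MoE feed-forward block, and the remaining layers use a dense MLP. The core code is: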

```python
# Excerpt from the decoder layer's __init__: choose the feed-forward block per layer.
self.is_moe_layer = layer_idx in config.moe_layers
if self.is_moe_layer:  # the 128E model interleaves dense / sparse
    self.feed_forward = Llama4TextMoe(config)
else:
    self.feed_forward = Llama4TextMLP(config, intermediate_size=config.intermediate_size_mlp)
```
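The two ideas above, per-layer selection of the feed-forward type and a shared expert combined with one routed expert, can be illustrated with a toy example in plain Python. Everything here is an assumption made for illustration: the every-second-layer pattern, the sigmoid gate applied to the routed output, and the names `top1_moe`, `router_weights`, and `layer_kinds` are hypothetical and do not reproduce the real implementation.

```python
import math


def top1_moe(x, router_weights, routed_experts, shared_expert):
    """Toy MoE feed-forward for one token vector x (list of floats)."""
    # Router: one logit per routed expert (dot product with the token).
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    best = max(range(len(logits)), key=lambda i: logits[i])  # top-1 routing
    gate = 1.0 / (1.0 + math.exp(-logits[best]))             # sigmoid gate (assumed)
    routed_out = routed_experts[best](x)
    shared_out = shared_expert(x)                            # always applied
    return [s + gate * r for s, r in zip(shared_out, routed_out)], best


# Hypothetical interleaving: every second layer is an MoE layer.
NUM_LAYERS = 6
moe_layers = list(range(1, NUM_LAYERS, 2))
layer_kinds = ["moe" if i in moe_layers else "dense" for i in range(NUM_LAYERS)]

x = [1.0, 2.0]
router_weights = [[1.0, 0.0], [0.0, 1.0]]
routed_experts = [lambda v: [2 * t for t in v], lambda v: [-t for t in v]]
shared_expert = lambda v: list(v)

out, chosen = top1_moe(x, router_weights, routed_experts, shared_expert)
print(layer_kinds, chosen, out)
```

Only one routed expert runs per token, so the per-token compute stays close to that of a dense layer plus the shared expert, regardless of how many routed experts exist.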

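Early fusion. LLaMA 4 is described as a natively multimodal model, but its architecture still follows the Vision Encoder-MLP-LLM pattern. The difference is that the patch embedding does not use a convolution: it flattens patches directly with nn.Unfold and then applies a linear layer to project them to the vision encoder's hidden size. The code is as follows: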

```python
import torch
from torch import nn


class Llama4UnfoldConvolution(nn.Module):
    def __init__(self, config):
        super().__init__()
        kernel_size = config.patch_size
        if isinstance(kernel_size, int):
            kernel_size = (kernel_size, kernel_size)
        # Non-overlapping patches: the stride equals the patch size.
        self.unfold = torch.nn.Unfold(kernel_size=kernel_size, stride=config.patch_size)
        self.linear = nn.Linear(
            config.num_channels * kernel_size[0] * kernel_size[1],
            config.hidden_size,
            bias=False,
        )

    def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:
        # (batch, channels, H, W) -> (batch, channels * kh * kw, num_patches)
        hidden_states = self.unfold(hidden_states)
        # -> (batch, num_patches, channels * kh * kw)
        hidden_states = hidden_states.permute(0, 2, 1)
        # -> (batch, num_patches, hidden_size)
        hidden_states = self.linear(hidden_states)
        return hidden_states
```
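To see what the unfold-then-linear step computes, the sketch below re-implements it on nested Python lists for a tiny single-channel image. With the stride equal to the patch size, this is mathematically the same operation as a convolution whose kernel and stride both equal the patch size. The helper names `unfold_patches` and `linear`, the 4x4 image, and the weights are all made up for illustration.

```python
def unfold_patches(image, patch):
    """Flatten non-overlapping patch x patch blocks of a C x H x W nested list.

    Each patch is flattened channel first, then row, then column, which is the
    ordering torch.nn.Unfold uses.
    """
    channels, height, width = len(image), len(image[0]), len(image[0][0])
    patches = []
    for top in range(0, height - patch + 1, patch):
        for left in range(0, width - patch + 1, patch):
            patches.append([
                image[c][top + i][left + j]
                for c in range(channels)
                for i in range(patch)
                for j in range(patch)
            ])
    return patches


def linear(vec, weight):
    """Bias-free linear layer: weight has shape (out_features, in_features)."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weight]


# One channel, 4x4 image with pixel values 0..15, patch size 2.
image = [[[r * 4 + c for c in range(4)] for r in range(4)]]
weight = [[1, 1, 1, 1], [1, 0, 0, -1]]  # hidden size 2, made-up weights

patches = unfold_patches(image, patch=2)
embeddings = [linear(p, weight) for p in patches]
print(patches)
print(embeddings)
```

Each 2x2 patch becomes a 4-value vector, and the linear layer maps it to one embedding per patch, giving a sequence of four patch tokens for this image.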

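Other training optimizations include:

- MetaP: used to select hyperparameters.

- FP8 precision: like DeepSeek-V3, training uses FP8 precision.

- Mid-training: after the pre-training stage, an additional training stage is added to improve key capabilities such as long-context handling.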

Post-training

Post-training consists of three stages:

- SFT

- online RL

- DPO

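The authors find that SFT and DPO can limit the model's ability to explore, especially in domains such as math and coding. To address this, they use LLaMA models to grade prompts by difficulty and then remove 50% of the data tagged as easy.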
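A minimal sketch of this kind of difficulty-based pruning is shown below. It assumes each example already carries a `difficulty` label produced by a judge model; the field name, the `drop_easy_examples` helper, and the random selection of which easy examples to drop are all assumptions for illustration, not the authors' procedure.

```python
import random


def drop_easy_examples(examples, drop_fraction=0.5, seed=0):
    """Remove a fraction of the examples labelled 'easy'; keep everything else."""
    rng = random.Random(seed)
    easy = [ex for ex in examples if ex["difficulty"] == "easy"]
    hard = [ex for ex in examples if ex["difficulty"] != "easy"]
    n_keep = len(easy) - int(len(easy) * drop_fraction)
    return hard + rng.sample(easy, k=n_keep)


# Hypothetical dataset: six easy prompts and four hard prompts.
dataset = [{"prompt": f"easy-{i}", "difficulty": "easy"} for i in range(6)]
dataset += [{"prompt": f"hard-{i}", "difficulty": "hard"} for i in range(4)]

filtered = drop_easy_examples(dataset)
n_hard = sum(ex["difficulty"] == "hard" for ex in filtered)
print(len(filtered), n_hard)
```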

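In the online RL stage, the authors design a continuous online RL strategy in which the process alternates between training the model and using it to filter prompts, balancing efficiency and accuracy.

The purpose of DPO is to improve the quality of the model's outputs.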

Evaluation

| Benchmark | LLaMA 4 Behemoth | LLaMA 4 Maverick | LLaMA 4 Scout | Gemini 2.0 Flash | GPT-4o |
|---|---|---|---|---|---|
| MMMU | 76.1 | 73.4 | 69.4 | 71.7 | 69.1 |
| MathVista | - | 73.7 | 70.7 | 73.1 | 63.8 |
| ChartQA | - | 90.0 | 88.8 | 88.3 | 85.7 |
| DocVQA | - | 94.4 | 94.4 | - | 92.8 |
| LiveCodeBench | 49.4 | 43.4 | 32.8 | 34.5 | 32.3 |
| MMLU Pro | 82.2 | 80.5 | 74.3 | 77.6 | - |
| GPQA Diamond | 73.7 | 69.8 | 57.2 | 60.1 | 53.6 |

Conclusion

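LLaMA 4 adopts an MoE architecture and is a natively multimodal model series. Architecturally, unlike DeepSeek-MoE, Aria, and OLMoE, LLaMA 4 does not increase expert granularity, although the OLMoE analysis suggests that finer granularity improves model flexibility. The table below summarizes the parameters of the related models.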

| Model | Layers | Heads (Q / KV) | Context Length | #Parameters (activated/total) | #Experts (activated/total) | #Tokens |
|---|---|---|---|---|---|---|
| DeepSeek-MoE (144.6B) | 62 | 32/32 | 2048 | 22.2B / 144.6B | (1 shared + 7 routed) / 64 | 245B |
| DeepSeek-V3 | 61 | 128 (MLA) | 128K | 37B / 671B | (1 shared + 8 routed) / 257 | 14.8T |
| Aria | 28 | 20/20 | 64K | 3.5B / 24.9B | (2 shared + 6 routed) / 66 | 6.4T (text) |
| OLMoE | 16 | 16/16 | 4096 | 1.3B / 6.9B | 8 / 64 | 5T |
| LLaMA 4 Maverick | 48 | 40/8 | 1M | 17B / 400B | (1 shared + 1 routed) / 128 | ~22T |
| LLaMA 4 Scout | 48 | 40/8 | 10M | 17B / 109B | (1 shared + 1 routed) / 16 | ~40T |
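One rough way to read the granularity difference from this table is to compare how many experts each model activates per token. The short sketch below uses only the expert counts listed in the table; treating the number of activated experts as a proxy for granularity is a simplification made here for illustration.

```python
# (activated experts per token, total experts) as listed in the table above.
experts = {
    "DeepSeek-MoE": (1 + 7, 64),
    "DeepSeek-V3": (1 + 8, 257),
    "Aria": (2 + 6, 66),
    "OLMoE": (8, 64),
    "LLaMA 4 Maverick": (1 + 1, 128),
    "LLaMA 4 Scout": (1 + 1, 16),
}

for name, (active, total) in experts.items():
    print(f"{name:18s} active={active:2d} total={total:3d} ratio={active / total:.3f}")

# Model with the fewest activated experts per token (first one wins ties).
fewest = min(experts, key=lambda name: experts[name][0])
print(fewest, experts[fewest][0])
```

The LLaMA 4 models activate two experts per token, while the other models in the table activate eight or nine smaller ones.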